What Enshittification Means for NGOs and Not-for-Profits

And what you can do to mitigate the risks of using these platforms.

by Martin Horn

Your organic posts don’t reach your audience anymore. Your own feeds are full of content you didn’t ask for and don’t really want to look at. Search engines keep feeding you AI-generated responses that seem wrong half the time. You wonder: is it just me, or is this happening to everyone? And if it is happening to everyone, does that mean your organization’s communications are getting lost in a sea of noise?

Many large digital platforms (think Meta and Google, and any number of other platforms, social and otherwise) show a recurring pattern of declining user experience over time. This decline has accelerated in the last few years and has been further intensified by the introduction of generative AI, which has brought low-quality AI-generated search responses and an increase in low-value content across social platforms. That said, the pattern predates generative AI: changes to social feeds and discovery algorithms have been diminishing organic reach for years. For mission-driven organizations, this shift is especially damaging. Their greatest strength has traditionally been the willingness of partners, service users, and community members to share their work organically. As organic reach declines and feeds become crowded with ads, ‘suggested’ posts, and AI slop, NGOs and not-for-profits are placed at a growing disadvantage. Messages that once traveled through networks of trust now struggle to surface at all, making it harder for these organizations to build awareness, mobilize communities, and advance their missions without paid promotion.

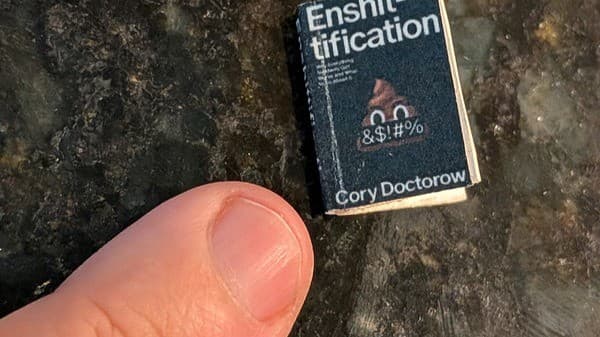

Writer Cory Doctorow coined the term enshittification to describe this process of platform deterioration. While the word itself is recent, the underlying pattern is not: a platform’s quality gradually declines once its decision-making becomes driven primarily by shareholder value. In practice, enshittification often includes the removal or degradation of features, increased reliance on automated moderation systems, prioritization of AI-generated content, and more aggressive advertising and user data extraction.

The recent enshittification of Reddit is a good example of this process. Reddit had functioned as a largely user-run network of semi-autonomous communities, heavily reliant on the volunteer labour of subreddit moderators. Over time, advertising increased, algorithmic ranking began to affect discoverability, and access to the API was restricted. This limited the third-party moderation tools many moderators relied on and pushed users toward the official app environment. These changes increased monetization opportunities while reducing community control.

For advertisers, this pattern can look attractive in theory, because available inventory grows, but in practice signal quality declines. Context becomes noisier, brand safety is harder to manage, and engagement metrics may reflect conflict or volatility rather than sustained interest or trust. Advertisers also have less control over what kinds of content appear alongside their ads. Beyond brand safety, this creates a deeper problem. Advertising on social platforms works in part because of context. In an enshittified feed, context begins to collapse, making it less certain that niche or interest-based advertising will continue to perform as expected. Many advertisers have already seen performance decline, in part for this reason.

For advertisers, the result is rising costs and diminishing returns. For NGOs and nonprofit organizations, the risks are compounded, because these organizations depend on trust, safety, and long-term relationships. Increasing platform volatility and declining effectiveness make reliance on these systems riskier, both for the effectiveness of advertising spend and organic social media initiatives and for brand equity. There is also an ethical consideration for mission-driven organizations: using social media platforms can be interpreted as supporting systems that are not neutral and whose leadership priorities may not align with your organization’s mission.

Doctorow argues that meaningful solutions require structural change, including antitrust enforcement, mandated interoperability, protection of user modification rights (right to repair and right to tinker), and regulation of dominant platforms. In the meantime, organizations can reduce risk by diversifying channels, investing in owned media, focusing on long-term engagement rather than short-term performance metrics, incorporating ethical criteria into media planning, and communicating clearly with leadership about the limits of performance within degraded systems.

More broadly, organizations need to stop thinking about engagement in the abstract and start thinking about community-building. Many nonprofits already understand this intuitively. Their strength lies in their stakeholders, not in their on-platform followings. This is not necessarily about abandoning platforms entirely, though in some cases that may be appropriate. It is about understanding their limitations and hedging against a near-term future in which they can no longer be relied upon to deliver the services they claim are central to their value.

Have a difficult communications problem? Reach out; we’re always happy to chat.